Introduction

Bela is an open-source embedded platform for real-time, ultra-low-latency audio and sensor processing on the BeagleBone Black. It was created by the Augmented Instruments Laboratory.

The Augmented Instruments Laboratory is part of the Centre for Digital Music (C4DM) at Queen Mary University of London. It focuses on developing new digital musical instruments, including those which extend the capabilities of familiar acoustic instruments.

Resources

- Bela: beautifully interactive sensors and sound

- An Environment for Submillisecond-Latency Audio and Sensor Processing on BeagleBone Black (Audio Engineering Society Convention Paper)

What is Bela?

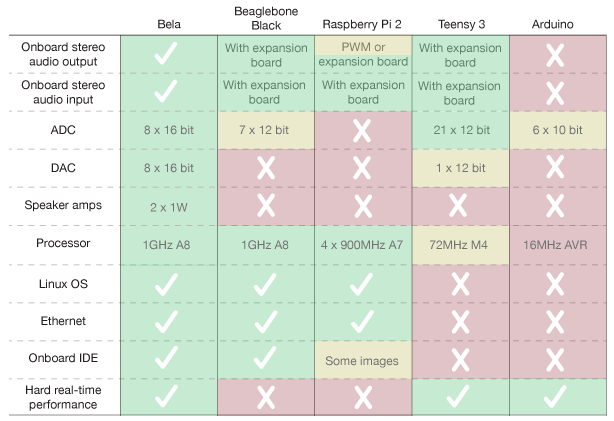

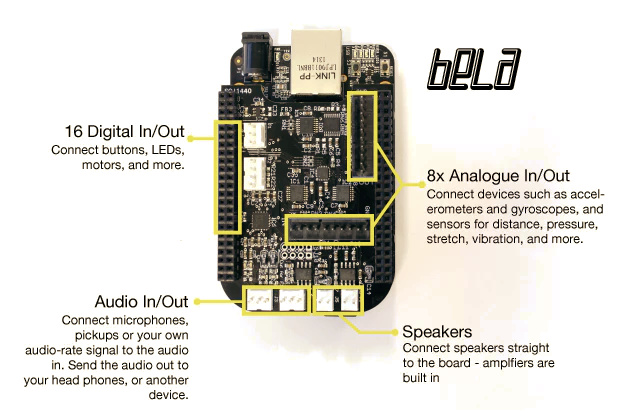

Bela is an embedded computing platform developed for high-quality, ultra-low-latency interactive audio. Bela provides stereo audio, analogue and digital I/O in a single self-contained package. It combines the processing power of the BeagleBone Black embedded computer with the timing precision and connectivity of a microcontroller.

Bela features an on-board, browser-based IDE, making it easy to get up and running without requiring any additional software. The end result: digital instruments and interactive objects that are faster to develop and more responsive to use.

Performance

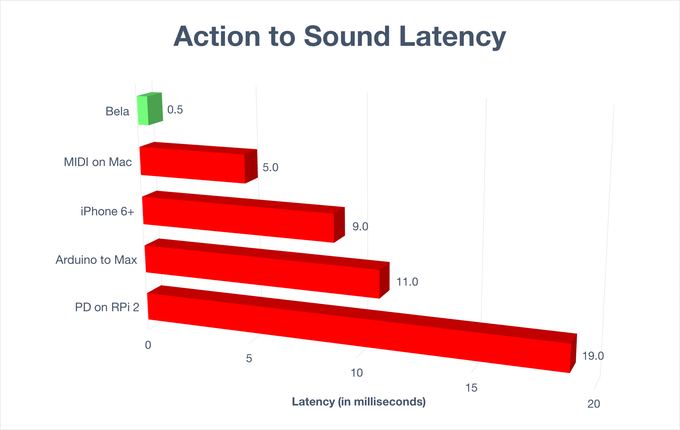

Bela is designed with audio in mind. It uses the BeagleBone Black single-board computer, which features a 1 GHz ARM Cortex-A8 processor and 512 MB of RAM. It runs a custom Linux audio environment that gives you buffer sizes as small as 2 samples, producing latency as low as 1 millisecond from audio in to audio out, or even down to 100 microseconds from analogue in to analogue out. What’s more, every analogue and digital pin is automatically sampled at audio rate, providing precise, jitter-free alignment between audio and sensors.
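To give a feel for what a 2-sample buffer means in time, the following minimal sketch converts a buffer size into a duration. The function name `blockDurationMicros` is hypothetical, and the sample rate is a parameter because the text above does not state one; 44.1 kHz is used below purely as an assumed example value. The buffer duration is only one contribution to end-to-end latency, so it should not be expected to match the quoted input-to-output figures.

```cpp
#include <cassert>

// Duration of one processing buffer, in microseconds.
// `frames` is the buffer size in samples per channel.
// `sampleRateHz` is an assumed parameter: the sample rate is not given in the text.
double blockDurationMicros(unsigned int frames, double sampleRateHz)
{
    assert(sampleRateHz > 0.0); // a non-positive rate has no meaning here
    return 1e6 * static_cast<double>(frames) / sampleRateHz;
}
```

Under the assumed rate of 44.1 kHz, `blockDurationMicros(2, 44100.0)` gives roughly 45 microseconds, while a 64-sample buffer at the same rate lasts about 1.45 milliseconds.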

Bela combines the low-latency real-time performance of microcontrollers with the power and connectivity of embedded Linux computers. Through Bela’s dedicated design we can achieve audio latency lower than that of even a high-end laptop.

Development history

In 2016, the Bela team ran a successful Kickstarter campaign. It progressed from the main goal of developing the Bela Cape to the stretch goals of developing the Audio Expander Capelet and the Multiplexer Capelet.

Details

Hardware

See also

Software

Bela runs a custom audio processing environment based on the Xenomai real-time Linux extensions. Your audio code runs in hard real-time, bypassing the entire operating system to go straight to the hardware. We have written a custom audio driver using the Programmable Real-Time Unit (PRU), a microcontroller on the same chip as the BeagleBone Black CPU. This driver is capable of buffer sizes as small as 2 audio samples for very low latency, and its performance is not affected by other system load. No other embedded Linux platform can match this performance.

The Bela C++ API gives you a clean and lightweight way to write audio code. Fill in three functions: setup() runs once at the beginning; render() runs once for each new audio buffer; cleanup() runs once at the end. All the analogue and digital pins are sampled automatically at audio rate, so sensor signals can be treated the same way as audio in your code. You can write your code using the browser-based IDE, or alternatively a set of build scripts lets you use an editor of your choice and then compile the code on the board.
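The three-function lifecycle can be illustrated with a host-independent sketch. This is not the Bela API itself: the real headers, function signatures and context type are not reproduced here, and `SketchContext`, `SketchState` and `runBlocks` are hypothetical stand-ins invented for illustration. The point is only the shape of the program: one-time setup, a per-buffer render that treats a sensor signal like audio, and one-time cleanup.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for the per-buffer data a platform would hand to user code.
struct SketchContext {
    std::size_t frames;            // samples per buffer
    std::vector<float> audioIn;    // one audio input channel
    std::vector<float> analogIn;   // one sensor channel, sampled at audio rate
    std::vector<float> audioOut;   // one audio output channel
};

// Hypothetical user state that persists between calls.
struct SketchState {
    bool ready = false;
    std::size_t blocksRendered = 0;
};

// Runs once at the beginning: allocate and initialise.
bool setup(SketchContext& ctx, SketchState& state)
{
    ctx.audioOut.assign(ctx.frames, 0.0f);
    state.ready = true;
    return true;
}

// Runs once per buffer: the sensor value scales the audio, sample by sample.
void render(SketchContext& ctx, SketchState& state)
{
    assert(state.ready);
    assert(ctx.audioIn.size() >= ctx.frames && ctx.analogIn.size() >= ctx.frames);
    for (std::size_t n = 0; n < ctx.frames; ++n) {
        ctx.audioOut[n] = ctx.audioIn[n] * ctx.analogIn[n];
    }
    ++state.blocksRendered;
}

// Runs once at the end: release whatever setup() acquired.
void cleanup(SketchContext&, SketchState& state)
{
    state.ready = false;
}

// Hypothetical host loop standing in for the real-time environment.
std::size_t runBlocks(SketchContext& ctx, SketchState& state, std::size_t blocks)
{
    if (!setup(ctx, state)) {
        return 0;
    }
    for (std::size_t b = 0; b < blocks; ++b) {
        render(ctx, state);
    }
    cleanup(ctx, state);
    return state.blocksRendered;
}
```

Because the sensor channel arrives at the same rate and in the same buffer as the audio, the inner loop can index both with the same sample counter, which is the property the paragraph above describes.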

Some pictures